When crypto meets philosophy

On FTX, fraud, and normative ethics

If you haven’t been online recently: one of cryptocurrency’s biggest companies, FTX, has just lost all its value, along with at least $1 billion in customer funds. FTX is currently under investigation by the SEC and DOJ. It looks extremely likely that FTX and its CEO, Sam Bankman-Fried, acted unethically by mishandling customer funds.

I leave crypto and the law to more knowledgeable people. What I want to explore here is the age-old question: do the ends justify the means?

Bankman-Fried has been one of the major funders of effective altruism (EA). Put simply, the EA movement aims to do the most good with the resources one has. Bankman-Fried, a “homegrown” EA billionaire, had purportedly made his money in order to give it away (recent comments make it unclear how true this was). His foundation says it has given away $190 million (the team running his philanthropic fund all resigned last week), and he had become one of the faces of the EA movement.

I first heard about EA in college, but learned more about it around 2018 while working in Zambia. My organization was trying to figure out how cost-effective it was to give money to women in Northern Nigeria to vaccinate their children. I thought this was great, and I still do. I believe a world with more vaccinated babies is a better world, that we should vaccinate babies cost-effectively, and that vaccinating babies is probably a much better use of my money than anything I’m spending it on now.[1]

But EA’s idea of “doing the most good” has always raised ethical questions. One of the first EA articles I read in Zambia asked whether to take a harmful career if you could spend the money it earned on something good (their verdict: usually not). EA has always been a movement made up mostly of consequentialist (see below) and consequentialist-leaning people. As someone who is not exactly a consequentialist, I found it interesting that the article cited the diversity of philosophers’ ethical views as evidence that EA should take different value systems seriously.

Now that FTX has imploded, discussion of moral philosophy, value pluralism, and fraud has been rampant within the EA movement and among anyone who wants to weigh in on FTX. How could Bankman-Fried go so wrong? Was this “ends justify the means” reasoning taken to its logical conclusion? Overall, the collapse raises a lot of questions for the EA movement, and about justifying the means more broadly.

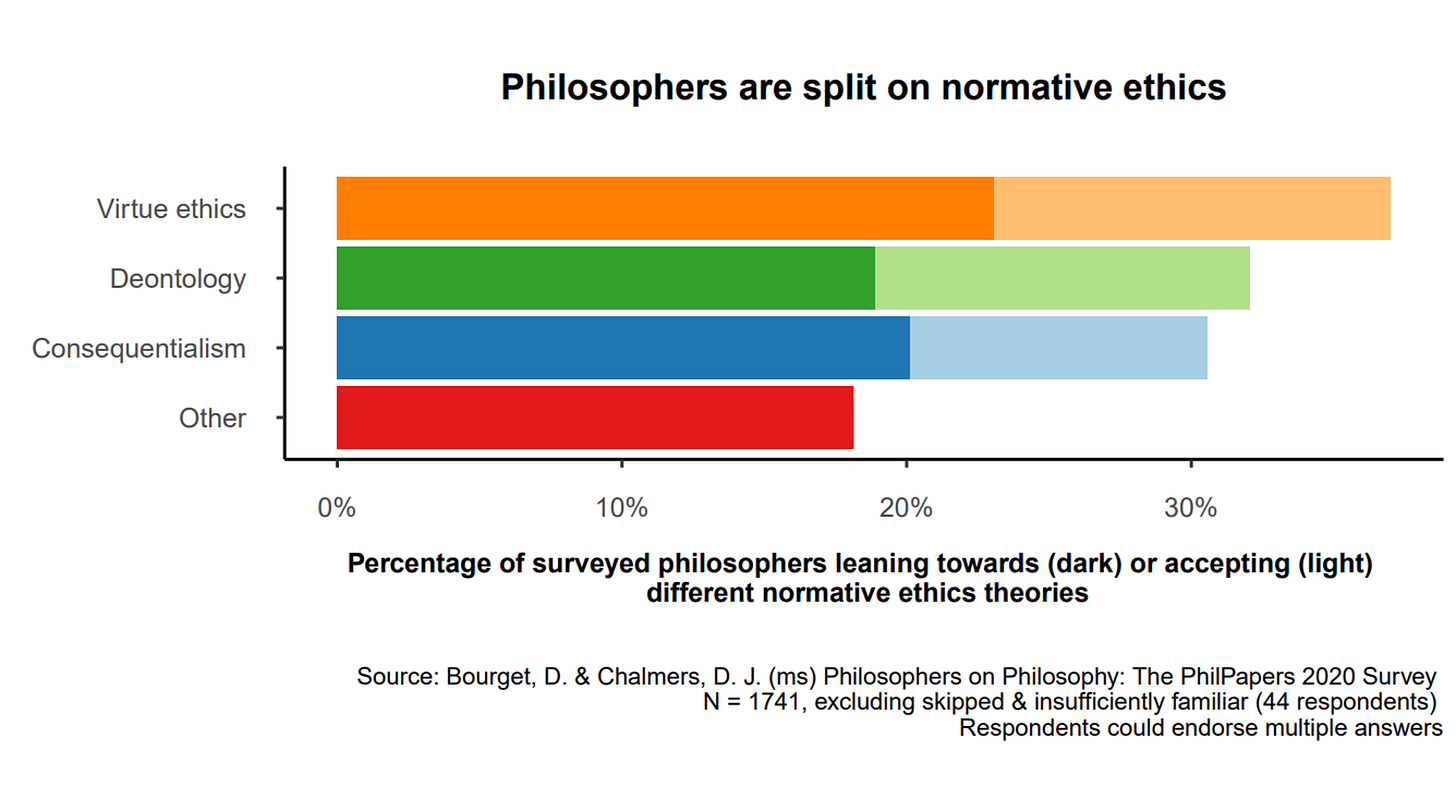

This discourse made me wonder what the philosophers were up to, so I went back to the article I’d read in 2018 and looked at the survey of philosophers it cited. According to the 2020 PhilPapers survey by David Bourget and David Chalmers, philosophers still hold varied views on how people should think about ethical decisions.

So what does this chart mean?

Normative ethics is the part of moral philosophy that asks whether the actions you take in real life are right or wrong. There are three broad categories of Western normative ethics: deontology, consequentialism, and virtue ethics.

Deontology is the idea that we should act morally because, well, we should. Morality rests on our duties and obligations to do what is right.

While deontology is part of a lot of religions and philosophies (e.g., believing you should do something because God or scripture tells you to), one of the best-known formulations is Immanuel Kant’s.

Kant says we should act only in a way that we would want to become a universal law. In other words, before you act, ask yourself whether the world would be a good place if everyone in it acted the way you did.

Strict Kantian deontology would not be okay with defrauding people under any circumstances, because if everyone were defrauding everyone all the time, the world would cease to function well. “Fraud” is not a good universal law.[2]

Consequentialism focuses on the consequences of our actions: an action is good if its consequences are good.[3]

The most common form of consequentialism is utilitarianism, which sees the good as happiness and pleasure. Under utilitarianism, acting morally is acting in a way that maximizes the pleasure in the world.

Strict consequentialism/utilitarianism is the “ends justify the means” philosophy, in which it’s good to defraud people if doing so leads to a greater good. In practice, though, a lot of utilitarians don’t actually believe this. You could get caught, and being caught breaking a norm very likely has worse expected consequences than whatever the fraud gains. See this post on “prudent” vs. “naïve” utilitarianism.

Bankman-Fried has accepted the label “a fairly pure Benthamite utilitarian,” and effective altruism is steeped in utilitarian philosophy. According to a 2019 survey, people within the EA movement are much more consequentialist than the philosophers surveyed here.

Virtue ethics centers on becoming a good, or virtuous, person. Basically, if you become a person of good character, you’ll have the wisdom to do the right thing in any given situation. As with deontology, even if the consequences of your virtuous actions don’t end up being good, you’ve still acted virtuously.

Under virtue ethics, fraud is probably not good: a virtuous person is not the sort of person who defrauds people. I really like virtue ethics and probably find it the most useful way to think about ethics in my personal life, but it’s admittedly vague compared with a duty-based rule or a cost-benefit analysis.

As the chart shows, philosophers, who are based mostly in high-income, largely English-speaking countries (at most 10 percent of the philosophers in this survey came from the Global South), are almost evenly split on which normative theory is best. So while about a third of philosophers might (to some extent) agree that “the ends justify the means,” most wouldn’t.

I’m a bit sad that I’m limited to philosophers here. Most of us aren’t thinking about deontology all day, but we all have to make ethical decisions. The ethics of mothers in Northern Nigeria are important, but I don’t know what they are. When I looked for population surveys on this, I couldn’t find any (who but the most pedantic of us even knows what deontology is?). My guess, though, is that the general public’s ethical systems would look different from this chart, because most people worldwide are religious and most of these philosophers are not.

Maybe Bankman-Fried aimed to do something good; maybe he didn’t. From a strict utilitarian perspective, though, if Bankman-Fried ended up doing net good with this money, it might still have been worth it. (Most) deontologists and virtue ethicists would say no: if he defrauded thousands of people of their money, it was not.

I hope this whole awful saga pushes the EA movement to guard against any “ends justify the means” attitude, and not just to claim to account for other moral concerns because that’s the most utilitarian thing to do in a PR crisis! I do think most of the people in EA I’ve met are trying to be ethical, even when our conclusions differ wildly, and I think there’s scope for the movement to adjust its ideology and practice to account more seriously for different ethical systems.

Intuitively, fraud is bad, and most people don’t need a grand framework to see that. On a personal level, none of us are dealing with Bankman-Fried’s level of power and wealth. But we all make moral tradeoffs every day: whether to take a job, whether to give or accept money, whether to lie or stay silent. Philosophers (and everyone else) have long disagreed about when the ends might justify the means. Maybe all we can do is try our best, with humility.

Edited by Surbhi Bharadwaj and Quincy Cason

[1] Vaccinating kids is no longer the core of the EA movement, which has become more focused on biorisks and AI. 80,000 Hours, the EA career advice website, doesn’t list global health among its most pressing world problems. People inside and outside of EA disagree on what a good world would look like, now and in the future. The question of what good ends are for humanity is beyond the scope of this post, although I hope to write a more focused discussion of longtermism in the future. Here, I’m more interested in the means by which you’re willing to reach whatever ends you think are good.

[2] Much of the discourse has assumed that deontology = Kant, but it doesn’t have to! You could feasibly make a non-Kantian, rights-centered deontological argument that it’s morally permissible to, for example, steal from or lie to the rich and give to the poor.

[3] There are many ways this can go, depending on which consequences you think are important or good.

There is a difference between evaluating future actions and evaluating past actions. Obviously it’s hard to know what the consequences of any action are, but it’s reasonable to draw a limit at some point, given that counterfactuals will dominate over the long term. Clearly, by a consequentialist standard, SBF’s past actions are not justified, and Matt Yglesias made a good point that even looking forward the calculations are bad (setting the fraud aside): https://www.slowboring.com/p/some-thoughts-on-the-ftx-implosion.

"But when you push this up into the range of billions of dollars and are talking about grant-making and political influence, it doesn’t make sense. The whole calculus is based on the idea that the volume of need is so large that when it comes to helping the global poor, you don’t face diminishing marginal returns in the relevant financial range. But there clearly are diminishing returns in grant-making, community-building, and political advocacy. And the instability itself becomes costly. People don’t want to join projects that are likely to vanish without warning, and the vanishing itself leaves resources stranded and wasted. I was confused when SBF explained this to me and kind of thought it was an end-of-the-bar bluster despite the lack of alcohol, but it was the actual official doctrine."

As another EA-adjacent but not-EA person, I hope this incident prompts some reflection on utility calculations and earning to give.

Finally, I don’t know why philosophers don’t generally answer that the correct ethical theory is a combination of different ethical systems. My view is that an action should be judged by approximately the floor (the lower) of its intentions and its consequences. My actual view involves a more complicated calculation, so that good intentions with bad consequences count as better than bad intentions with bad consequences, but this is close enough.